Activation functions transform a node's weighted sum of its inputs plus a bias into an output, which lets each node learn part of a complex function. The most basic activation function is the linear (identity) one, which simply passes that weighted sum through unchanged. No matter how many layers or units the network has, using a linear activation function at every node yields nothing more than a standard linear model, because a composition of linear functions is itself linear. Much of the power of neural networks comes from using nonlinear activation functions at each node.
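
To make the point about linear activations concrete, here is a minimal sketch in plain Python. The weights `W1`, `b1`, `W2`, `b2` and the helper names are made up for illustration. It shows that two stacked layers with linear activations compute the same output as a single linear layer with weights `W2 @ W1` and bias `W2 @ b1 + b2`.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def matmul(A, B):
    """Multiply matrix A by matrix B (both lists of rows)."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

def add(u, v):
    """Element-wise sum of two vectors."""
    return [a + b for a, b in zip(u, v)]

# Hypothetical parameters: 2 inputs -> 3 hidden units -> 2 outputs.
W1 = [[1.0, 2.0], [0.0, -1.0], [3.0, 1.0]]
b1 = [0.5, -0.5, 1.0]
W2 = [[1.0, 0.0, 2.0], [-1.0, 1.0, 0.0]]
b2 = [0.0, 1.0]

def two_layer_linear(x):
    # Linear (identity) activation: each layer outputs its weighted sum as-is.
    h = add(matvec(W1, x), b1)
    return add(matvec(W2, h), b2)

# Collapse both layers into one equivalent linear layer.
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)

def single_linear(x):
    return add(matvec(W, x), b)

x = [2.0, -3.0]
print(two_layer_linear(x))  # [4.5, 7.0]
print(single_linear(x))     # [4.5, 7.0]
```

Adding more linear layers only changes the values of the collapsed `W` and `b`, not the kind of function the network can represent.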

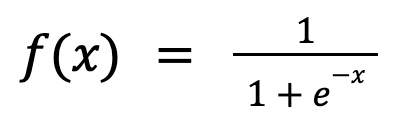

Sigmoid (logistic) is one such nonlinear activation function. It is the same function used in logistic regression, and it maps any real-valued input to an output strictly between 0 and 1. It is therefore well suited to the output layer of a binary classifier, where the output is interpreted as the probability of the positive class.
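
As a rough illustration, the sigmoid is usually written as σ(z) = 1 / (1 + e^(-z)). The sketch below is one possible plain-Python implementation, not taken from any particular library. The two-branch form is a common trick to avoid overflow for large negative inputs, and the `predict_label` helper with its 0.5 threshold is a hypothetical example of turning the probability into a class label.

```python
import math

def sigmoid(z):
    """Logistic function 1 / (1 + e^(-z)), written to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, exp(-z) could overflow, so use the equivalent
    # form e^z / (1 + e^z) instead.
    e = math.exp(z)
    return e / (1.0 + e)

def predict_label(z, threshold=0.5):
    """Hypothetical helper: map a raw score z to a 0/1 class label."""
    return 1 if sigmoid(z) >= threshold else 0

print(sigmoid(0.0))        # 0.5
print(predict_label(2.0))  # 1
print(predict_label(-2.0)) # 0
```

A raw score of 0 corresponds to a probability of exactly 0.5, so with this threshold the sign of the score decides the predicted class.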